How Contour Measuring Machines Improve Quality Control in Precision Manufacturing

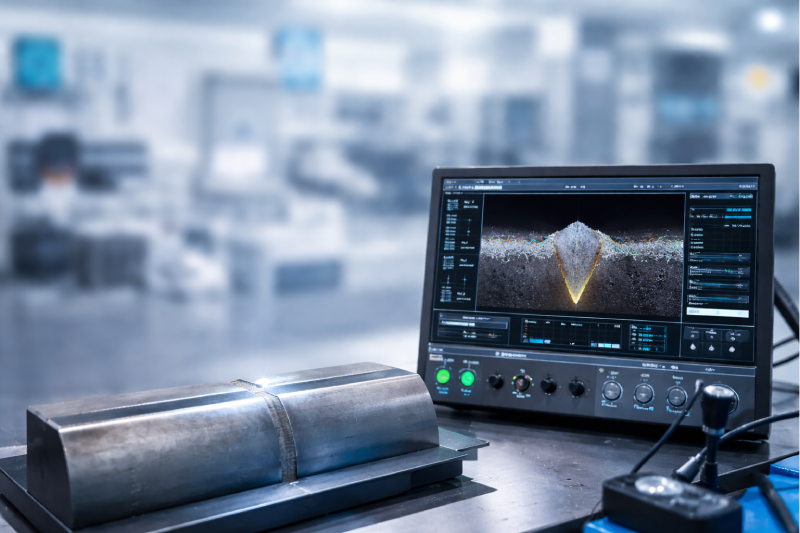

In today’s precision-driven manufacturing world, quality control isn’t just a checkpoint; it’s the foundation of every successful production process. Companies in the automotive, aerospace, medical device, and tooling industries demand parts that not only meet design specifications but also perform consistently and reliably. At the heart of ensuring this precision is a vital tool: the contour measuring machine. By capturing the exact shape and profile of complex surfaces, this instrument plays a key role in modern metrology and is indispensable for quality assurance in precision manufacturing.

What Is a Contour Measuring Machine?

A contour measuring machine (often shortened to "contour machine") is a specialized metrology instrument designed to trace and record the surface profile of manufactured parts with high accuracy. Traditional measurement tools may capture only basic dimensions. Contour measuring machines go further, measuring every curve, slope, radius, and edge along the traced profile. Whether the part is a tiny gear tooth or a mould cavity with intricate curves, these machines verify that the profile of every component matches its design.

At its core, a contour measuring machine maps detailed surface geometry in one of two ways. Contact methods physically trace the surface with a stylus. Non-contact methods use light or lasers. Advanced systems combine high-resolution sensors, magnetic linear drives, and analysis software to generate data that is both highly accurate and repeatable.

Why Quality Control Matters in Precision Manufacturing

In precision manufacturing, tolerances are tight. Even the smallest deviation can lead to part malfunction, costly rework, or product failure once assembled. Traditional measurement methods often fall short when quality depends on complex geometry or micro-level surface form. This is where contour measurement shines.
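To make "tight tolerances" concrete, here is a minimal Python sketch of how a traced profile might be checked against its nominal shape. It rests on several assumptions:

- The machine exports the trace as (x, z) points in millimetres.
- The nominal profile is a known function, here a hypothetical circular arc of radius 5 mm.
- The tolerance is the total width of a band centred on the nominal profile.
- Deviations are taken vertically along z, which approximates the true normal distance only where the profile slope is small.

The function names and all numbers are illustrative. They do not come from any vendor's software.

```python
import math

def nominal_arc_z(x, radius):
    """Height of a hypothetical nominal convex arc (circle centred at x=0, z=0)."""
    return math.sqrt(radius * radius - x * x)

def check_profile(points, radius, tolerance):
    """Compare traced (x, z) points with the nominal arc.

    `tolerance` is the total width of the tolerance band, so each point may
    deviate by at most tolerance / 2 above or below nominal (measured along z).
    Returns (max_abs_deviation, passed).
    """
    deviations = [z - nominal_arc_z(x, radius) for x, z in points]
    max_dev = max(abs(d) for d in deviations)
    return max_dev, max_dev <= tolerance / 2

# Hypothetical trace of a 5 mm radius arc with small form errors (mm).
trace = [(-2.0, 4.5845), (-1.0, 4.8995), (0.0, 5.0020), (1.0, 4.8980), (2.0, 4.5820)]

# A 3 um total band allows +/-1.5 um of deviation at every point.
max_dev, ok = check_profile(trace, radius=5.0, tolerance=0.003)
print(f"max deviation = {max_dev * 1000:.1f} um, within tolerance: {ok}")
```

With these numbers, the largest deviation is about 2 µm at the crest. That exceeds the ±1.5 µm half-width, so the check fails, even though the error would be invisible to the eye. Widening the band to 10 µm would let the same trace pass.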
By providing detailed profiles of parts directly from production lines or inspection labs, contour measuring machines enable engineers and quality teams to:

- Detect deviations before products leave the workshop
- Validate machining accuracy in real time
- Support statistical process control (SPC)
- Reduce scrap and rework
- Provide traceable documentation for quality standards

With the right instruments and measurement strategy, manufacturers can be more confident in every component they produce.

Key Ways Contour Measuring Machines Enhance Quality Control

Detecting Even the Smallest Deviations

A contour measuring machine is designed to capture minuscule differences in surface shape. These machines confirm that every aspect of a part conforms to its prescribed geometry, whether they are monitoring flank profiles on gears, edge radii on aerospace parts, or surface features on medical components. Even tiny deviations, invisible to the naked eye, can significantly affect part performance, and high-precision measurement catches these issues early.

Contour machines trace profiles with sensitive probes or high-resolution sensors. The resulting data lets engineers calculate geometric deviations, such as flatness, roundness, or contour irregularities, with high accuracy.

Supporting Continuous Monitoring in Production

Quality control isn’t a one-time event; it’s a continuous commitment. Modern contour machines are designed to integrate into production workflows. Automated scanning routines and real-time data feedback let teams inspect samples at regular intervals to confirm ongoing process stability. In high-volume environments, automated contour measuring machines provide rapid, repeatable results that help identify trends or shifts in machining accuracy.
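Continuous monitoring of this kind is commonly implemented with a control chart. The following is a minimal Python sketch of an individuals (X) chart, one common SPC choice, applied to a measured characteristic such as an edge radius. It makes these assumptions:

- Each sampled part yields one measurement.
- Control limits come from an in-control baseline, calculated as mean ± 2.66 × average moving range. The factor 2.66 equals 3/d2, where d2 = 1.128 for moving ranges of two.
- A signal is raised for any point outside the limits, or for eight consecutive points on the same side of the centre line. The run rule is one common way to catch a gradual shift.

The data and function names are hypothetical.

```python
from statistics import mean

def individuals_limits(baseline):
    """Centre line and 3-sigma limits for an individuals (X) chart.

    Sigma is estimated as MR_bar / 1.128 (average moving range of two
    consecutive points), so the limits are centre +/- 2.66 * MR_bar.
    """
    centre = mean(baseline)
    mr_bar = mean(abs(b - a) for a, b in zip(baseline, baseline[1:]))
    return centre, centre - 2.66 * mr_bar, centre + 2.66 * mr_bar

def flag_signals(values, centre, lcl, ucl, run_length=8):
    """Return (index, reason) pairs for out-of-limit points and long runs."""
    signals = []
    run_side, run_count = 0, 0
    for i, v in enumerate(values):
        if v > ucl or v < lcl:
            signals.append((i, "outside control limits"))
        # +1 above centre, -1 below, 0 exactly on the centre line (resets the run).
        side = 1 if v > centre else -1 if v < centre else 0
        if side != 0 and side == run_side:
            run_count += 1
        else:
            run_side, run_count = side, (1 if side != 0 else 0)
        if run_count == run_length:  # report each run once, when it reaches the threshold
            signals.append((i, f"{run_length} points on one side of centre"))
    return signals

# Hypothetical edge-radius measurements in mm (nominal 0.500 mm).
baseline = [0.501, 0.499, 0.500, 0.502, 0.498, 0.500, 0.501, 0.499, 0.500, 0.500]
production = [0.501, 0.502, 0.501, 0.503, 0.502, 0.503, 0.502, 0.503, 0.504, 0.506]

centre, lcl, ucl = individuals_limits(baseline)
for index, reason in flag_signals(production, centre, lcl, ucl):
    print(f"sample {index}: {reason}")
```

With these hypothetical numbers, the run rule fires at sample 7. That is two samples before the first point (0.506 mm) crosses the upper control limit of roughly 0.5044 mm. This kind of early warning lets teams adjust tooling before parts drift out of tolerance.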
When a deviation is detected, teams can immediately adjust tooling, fixtures, or machining parameters. This reduces waste and avoids larger production issues down the line.

Elevating Precision in Contour Machining Processes

Contour machining is the process of shaping parts to achieve precise 3D contours, and it benefits directly from the insights that contour measurement provides. With accurate profile data from a contour measuring machine, engineers can check whether the tooling and machining paths are producing the exact shapes specified in the design. This cross-check bridges the gap between design intent and manufactured reality.

The feedback loop created by contour measurement improves machining accuracy over time. Teams can refine cutting strategies, optimize tool paths, and improve machine setup. These improvements raise product quality, and they can also increase productivity and shorten cycle times.

Enabling Better Decision-Making with Insightful Data

One of the greatest strengths of contour measuring machines is the wealth of data they generate. Measurements aren’t just numbers; they are actionable insights. Quality engineers can use this data to:

- Build statistical models for process control
- Predict when tools might wear beyond acceptable limits
- Benchmark performance across machines or production lines
- Comply with industry standards and documentation requirements

Because contour machines capture detailed profiles, manufacturers gain a deeper understanding of their processes. That understanding supports smarter decisions that improve overall quality and consistency.

Reducing Cost and Risk

At first glance, investing in high-precision metrology equipment may seem costly. Set against the cost of scrapped parts, customer returns, warranty claims, and lost reputation, however, the value becomes clear. Contour measuring machines help manufacturers avoid expensive mistakes by ensuring parts are correct the first time and every time.
Strong quality control also reduces the risk of product failure in the field, a crucial consideration for safety-critical industries such as aerospace and medical devices. Precise contour measurement can make the difference between a successful product launch and a costly recall.

Precision Starts with Accurate Measurement

Quality control in precision manufacturing is no longer optional; it’s a strategic imperative. With advanced contour measuring machines and measurement techniques, manufacturers can confidently deliver parts that meet stringent specifications. They can also improve operational efficiency and stay competitive in a demanding market.

Whether you’re producing complex automotive components, aerospace assemblies, or precision-engineered tools, a contour measuring machine is an essential part of your quality arsenal. When quality is non-negotiable, the right measurement technology ensures every part meets the mark and every customer walks away satisfied.